GPT-4: Advancements in AI Language Modeling

On Tuesday, OpenAI announced GPT-4, its next-generation AI language model. The system has several new capabilities, such as the ability to process images, and OpenAI claims that it is generally better at creative tasks and problem-solving. Although the company has cautioned that the differences between GPT-4 and its predecessors are subtle in casual conversation, the system still has notable improvements.

Assessing these claims is challenging because AI models, including GPT-4, are complex and multifunctional. These systems have hidden and unknown capabilities, which makes fact-checking difficult. If GPT-4 proposes a new chemical compound, for instance, chemists need to verify that the result is accurate. Despite this, GPT-4 is an exciting development and is already being integrated into mainstream products.

To understand its new capabilities, we have collected examples of its abilities from news outlets, Twitter, and OpenAI’s technical report. Our team has also conducted its own tests. GPT-4 has some limitations, as OpenAI has stated, such as its tendency to “hallucinate” information and make confidently wrong predictions. However, the system is still an impressive advancement in AI language technology.

As mentioned above, GPT-4’s ability to process images in addition to text is a significant practical improvement over its predecessors. The system is multimodal, meaning it can interpret both images and text, whereas GPT-3.5 could only handle text. GPT-4 can analyze the content of an image and relate that information to a written question. Unlike DALL-E, Midjourney, or Stable Diffusion, however, GPT-4 cannot generate images.

The New York Times demonstrated GPT-4’s ability to suggest recipes based on the contents of a fridge. GPT-4 produced multiple examples of savory and sweet dishes, but one suggestion required an ingredient that was not present: a tortilla.

GPT-4’s image processing capability has potential applications in medicine and engineering, such as analyzing X-rays and blueprints. However, the accuracy of its image recognition and the privacy concerns raised by personal photos require further research and testing.

GPT-4’s Ability To Recognize Objects

OpenAI claims that GPT-4’s ability to recognize objects and provide contextual understanding is comparable to that of a human volunteer, which is a significant advancement in the field.

While there are apps that offer basic object recognition, GPT-4 can do more than that. It can explain the world around the user, summarize cluttered webpages, and answer questions about what it “sees.” Although this functionality is not yet live, OpenAI has promised to make it available to users in a matter of weeks.

Several other companies have also been experimenting with GPT-4’s image recognition capabilities. Diagram, an AI design assistant tool, is reportedly working on integrating the technology to add features such as a chatbot that can provide feedback on designs and a tool that can assist in generating designs.

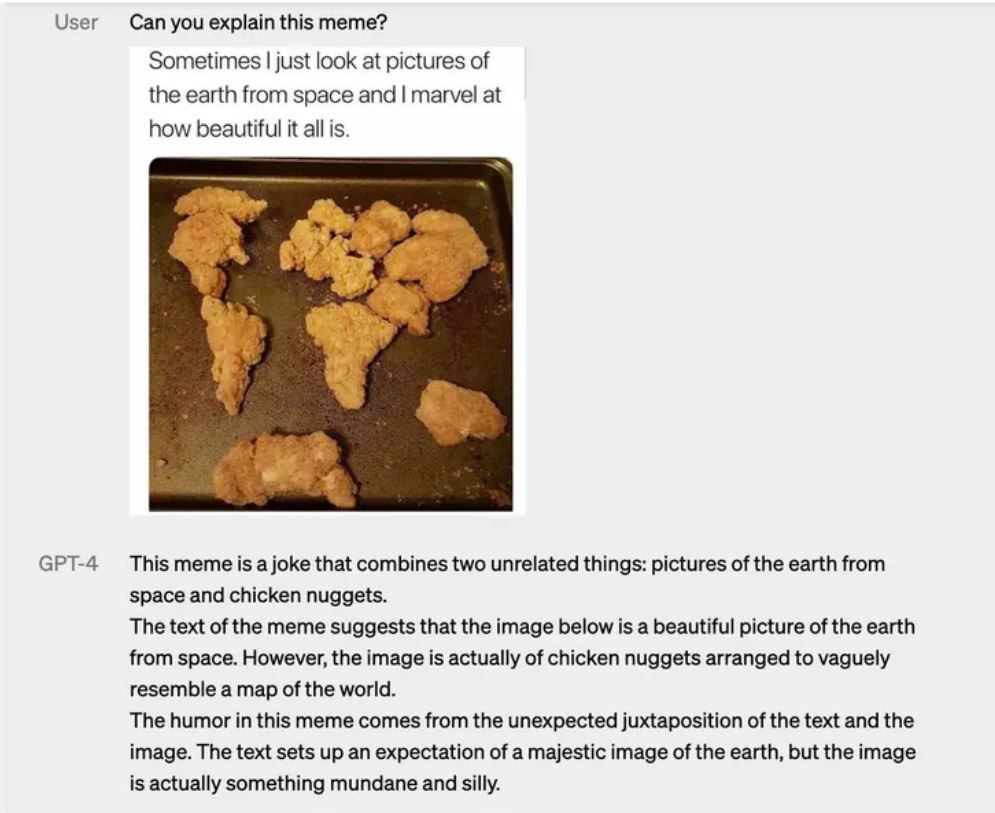

As seen in the images below, GPT-4 can also explain why an image is funny, which suggests it could be used in a range of applications.

[Image example here]